Tag: cloud computing

-

Cloud and AI: Powering a New Era of Innovation in the Utility Sector

The utility sector is transforming in response to climate change, shifting from centralized to decentralized, renewable energy models. Cloud computing and AI are essential for managing complexities such as real-time data processing, predictive maintenance, and cybersecurity. Embracing innovation is vital for improving sustainability and efficiency, and for addressing regulatory and cultural challenges.

-

Digital Supply Chain, Industry 4.0, and IoT/Edge Computing – a chat with Elvira Wallis (aka @ElviraWallis)

On this second Digital Supply Chain podcast on the theme of Industry 4.0, I had a great chat with Elvira Wallis (@ElviraWallis on Twitter and Elvira Wallis on LinkedIn). Elvira is the Global Head of IoT at SAP, so obviously I was keen to find out her take on how Digital Supply Chain, IoT and…

-

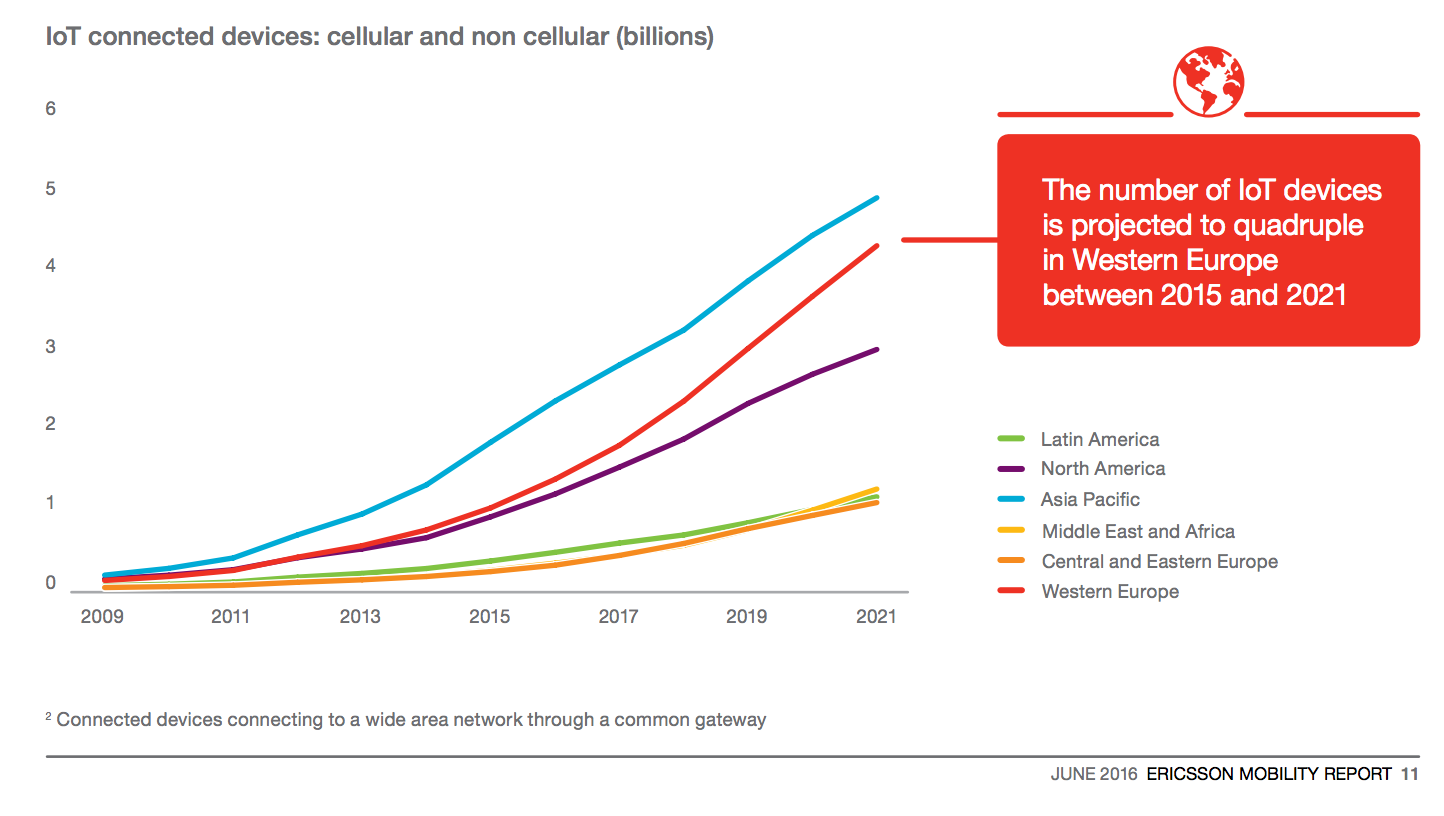

The Internet of Things – trends for the telecoms, data centre, and utility industries

I gave the closing keynote at an event in Orlando last week on the topic of The Impact of the Internet of Things on Telcos, Data Centres, and Utilities. The slides by themselves can be a little hard to grok, so I’ll go through them below. I should note at the outset that while many…

-

IBM acquires Weather.com for Cloud, AI (aaS), and IoT

IBM has announced the completion of the acquisition of The Weather Company’s B2B, mobile and cloud-based web properties, weather.com, Weather Underground, The Weather Company brand and WSI, its global business-to-business brand. At first blush this may not seem like an obvious pairing, but The Weather Company’s products are not just their free apps for your smartphone, they…

-

Equinix rolls out 1MW fuel cell for Silicon Valley data center

Equinix is powering one of its Silicon Valley data centers with a 1MW Bloom Energy fuel cell.

-

Lack of emissions reporting from (some) cloud providers is a supply chain risk

We at GreenMonk spoke to Robert Francisco, President North America of FirstCarbon Solutions, last week. FirstCarbon Solutions is an environmental sustainability company and the exclusive scoring partner of the supply chain program of CDP (formerly the Carbon Disclosure Project). Robert pointed out on the call that there is a sea change happening and that interest in disclosure…

-

Cloud computing meets supply chain transparency and risk

Supply chains? Yawn, right? While supply chains may seem boring, they are of vital importance to organisations, and their proper management can make or break companies. Some recent examples of where poorly managed supply chains caused, at best, serious reputational damage for companies include the Apple Computers child labour and worker suicides debacle; the Tesco…

-

Cloud computing companies ranked by their use of renewable energy

Cloud computing is booming. Cloud providers are investing billions in infrastructure to build out their data centers, but just how clean is cloud? Given that this is the week that the IPCC’s 5th assessment report was released, I decided to do some research of my own into cloud providers. The table above is a list…